GuidedVLA: Specifying Task-Relevant Factors via Plug-and-Play Action Attention Specialization

Published in Robotics: Science and Systems (RSS), 2026

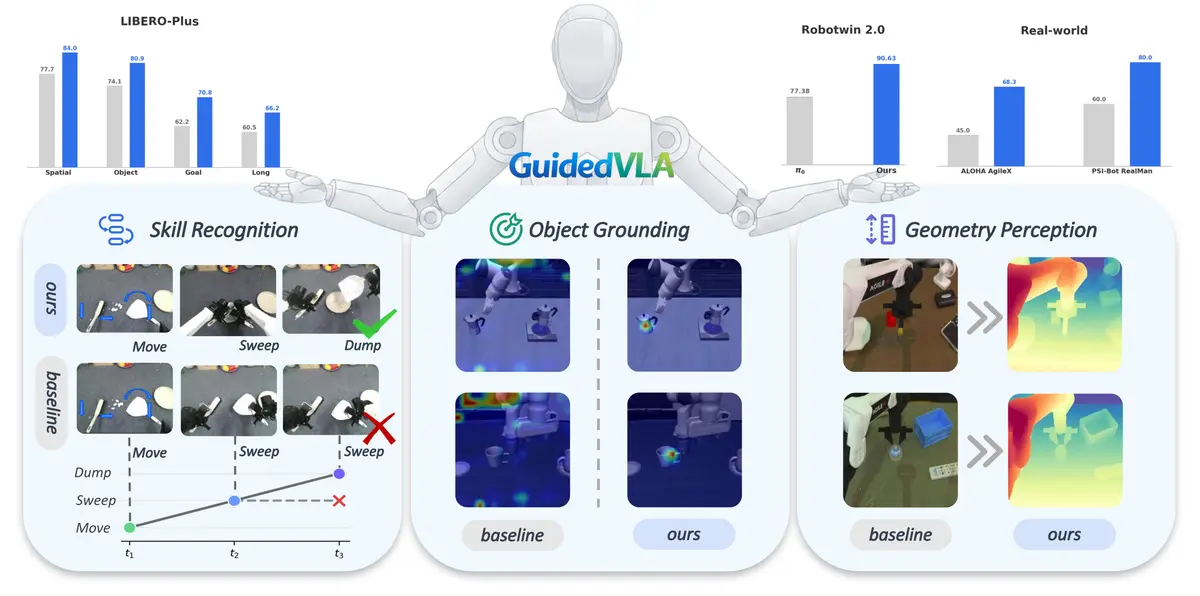

GuidedVLA is a framework for building more robust and interpretable Vision-Language-Action models by explicitly guiding the action decoder to capture task-relevant factors. We specialize action-decoder attention heads toward object grounding, temporal skill logic, and spatial geometry, improving generalization across simulation benchmarks and real-robot tasks.
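To make the idea of specializing an attention head concrete, here is a minimal sketch of one way a single head could be nudged toward task-relevant tokens: an auxiliary cross-entropy term between the head's attention distribution and a target mask (for example, the visual tokens covering the manipulated object). This is an illustrative assumption and not the GuidedVLA implementation; the function name `head_guidance_loss`, the mask format, and the choice of loss are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of raw attention scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def head_guidance_loss(attn_scores, target_mask, eps=1e-8):
    """Cross-entropy between one head's attention and a normalized target mask.

    attn_scores: raw (pre-softmax) scores of one head over the input tokens.
    target_mask: 0/1 flags marking the tokens the head should attend to
                 (hypothetical supervision signal, e.g. object tokens).
    """
    attn = softmax(attn_scores)
    mass = sum(target_mask)
    if mass == 0:
        # No supervision available for this sample, so contribute no loss.
        return 0.0
    target = [t / mass for t in target_mask]
    return -sum(t * math.log(a + eps) for t, a in zip(target, attn))


# Hypothetical example: 5 visual tokens, tokens 1 and 2 cover the task object.
object_mask = [0, 1, 1, 0, 0]
unfocused = [0.0, 0.0, 0.0, 0.0, 0.0]    # uniform attention
focused = [-2.0, 3.0, 3.0, -2.0, -2.0]   # attention concentrated on the object

loss_unfocused = head_guidance_loss(unfocused, object_mask)
loss_focused = head_guidance_loss(focused, object_mask)
```

Under these assumptions, the head that concentrates on the masked tokens receives the lower loss, so adding such a term to the training objective would encourage that head to ground the object. Analogous targets could in principle be defined for temporal or geometric cues, but how GuidedVLA actually constructs its supervision is described in the paper rather than here.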

Recommended citation: Xiaosong Jia*, Bowen Yang*, Zuhao Ge*, Xian Nie*, Yuchen Zhou*, Cunxin Fan*, et al. (2026). "GuidedVLA: Specifying Task-Relevant Factors via Plug-and-Play Action Attention Specialization." Robotics: Science and Systems (RSS).

Resources: Project | Paper | arXiv | Code | Checkpoint | Dataset | BibTeX